Merge pull request #38 from QURIresearch/rescript-refactor

Rescript refactor

19

.github/workflows/lang-jest.yml

vendored

Normal file

|

|

@ -0,0 +1,19 @@

name: Squiggle Lang Jest Tests

on: [push]

jobs:
  build:
    runs-on: ubuntu-latest
    defaults:
      run:
        shell: bash
        working-directory: packages/squiggle-lang
    steps:
    - uses: actions/checkout@v2
    - name: Install Packages
      run: yarn
    - name: Build rescript
      run: yarn run build
    - name: Run tests
      run: yarn test

19

.gitignore

vendored

|

|

@ -1,16 +1,5 @@

|

|||

.DS_Store

|

||||

.merlin

|

||||

.bsb.lock

|

||||

npm-debug.log

|

||||

/node_modules/

|

||||

.cache

|

||||

.cache/*

|

||||

dist

|

||||

lib/*

|

||||

*.cache

|

||||

build

|

||||

node_modules

|

||||

yarn-error.log

|

||||

*.bs.js

|

||||

# Local Netlify folder

|

||||

.netlify

|

||||

.idea

|

||||

.cache

|

||||

.merlin

|

||||

.parcel-cache

|

||||

|

|

|

|||

35

README.md

|

|

@ -2,18 +2,31 @@

|

|||

|

||||

This is an experimental DSL/language for making probabilistic estimates.

|

||||

|

||||

## DistPlus

|

||||

We have a custom library called DistPlus to handle distributions with additional metadata. This helps handle mixed distributions (continuous + discrete), a cache for a cdf, possible unit types (specific times are supported), and limited domains.

|

||||

This monorepo has several packages that can be used for various purposes. All

|

||||

the packages can be found in `packages`.

|

||||

|

||||

## Running

|

||||

`@squiggle/lang` in `packages/squiggle-lang` contains the core language, particularly

|

||||

an interface to parse squiggle expressions and return descriptions of distributions

|

||||

or results.

|

||||

|

||||

Currently it only has a few very simple models.

|

||||

`@squiggle/components` in `packages/components` contains React components that

|

||||

can be passed squiggle strings as props, and return a presentation of the result

|

||||

of the calculation.

|

||||

|

||||

```

|

||||

yarn

|

||||

yarn run start

|

||||

yarn run parcel

|

||||

```

|

||||

`@squiggle/playground` in `packages/playground` contains a website for a playground

|

||||

for squiggle. This website is hosted at `playground.squiggle-language.com`.

|

||||

|

||||

`@squiggle/website` in `packages/website` is the main descriptive website for squiggle;

|

||||

it is hosted at `squiggle-language.com`.

|

||||

|

||||

The playground depends on the components library which then depends on the language.

|

||||

This means that if you wish to work on the components library, you will need

|

||||

to package the language, and for the playground to work, you will need to package

|

||||

the components library and the playground.

|

||||

|

||||

Scripts are available for you in the root directory to do important activities,

|

||||

such as:

|

||||

|

||||

`yarn build:lang`. Builds and packages the language

|

||||

`yarn storybook:components`. Hosts the component storybook
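The dependency chain described above (playground, then components, then language) implies a build order. A minimal sketch of that ordering, assuming a hypothetical `deps` map and `buildOrder` helper that are not part of this repository; only the package names come from the README:

```typescript
// Hypothetical dependency map: package names are from the README,
// the map itself and buildOrder() are illustrative only.
const deps: Record<string, string[]> = {
  "@squiggle/playground": ["@squiggle/components"],
  "@squiggle/components": ["@squiggle/lang"],
  "@squiggle/lang": [],
};

// Depth-first topological sort: a package is emitted only after
// everything it depends on has been emitted.
function buildOrder(graph: Record<string, string[]>): string[] {
  const order: string[] = [];
  const visited: Record<string, boolean> = {};
  const visit = (pkg: string): void => {
    if (visited[pkg]) return;
    visited[pkg] = true;
    for (const dep of graph[pkg] || []) visit(dep);
    order.push(pkg);
  };
  Object.keys(graph).forEach((pkg) => visit(pkg));
  return order;
}

console.log(buildOrder(deps).join(" -> "));
// prints "@squiggle/lang -> @squiggle/components -> @squiggle/playground"
```

With the root scripts added in this PR, that order corresponds to running `yarn build:lang`, then `yarn build:components`, then `yarn build:playground`.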

|

||||

|

||||

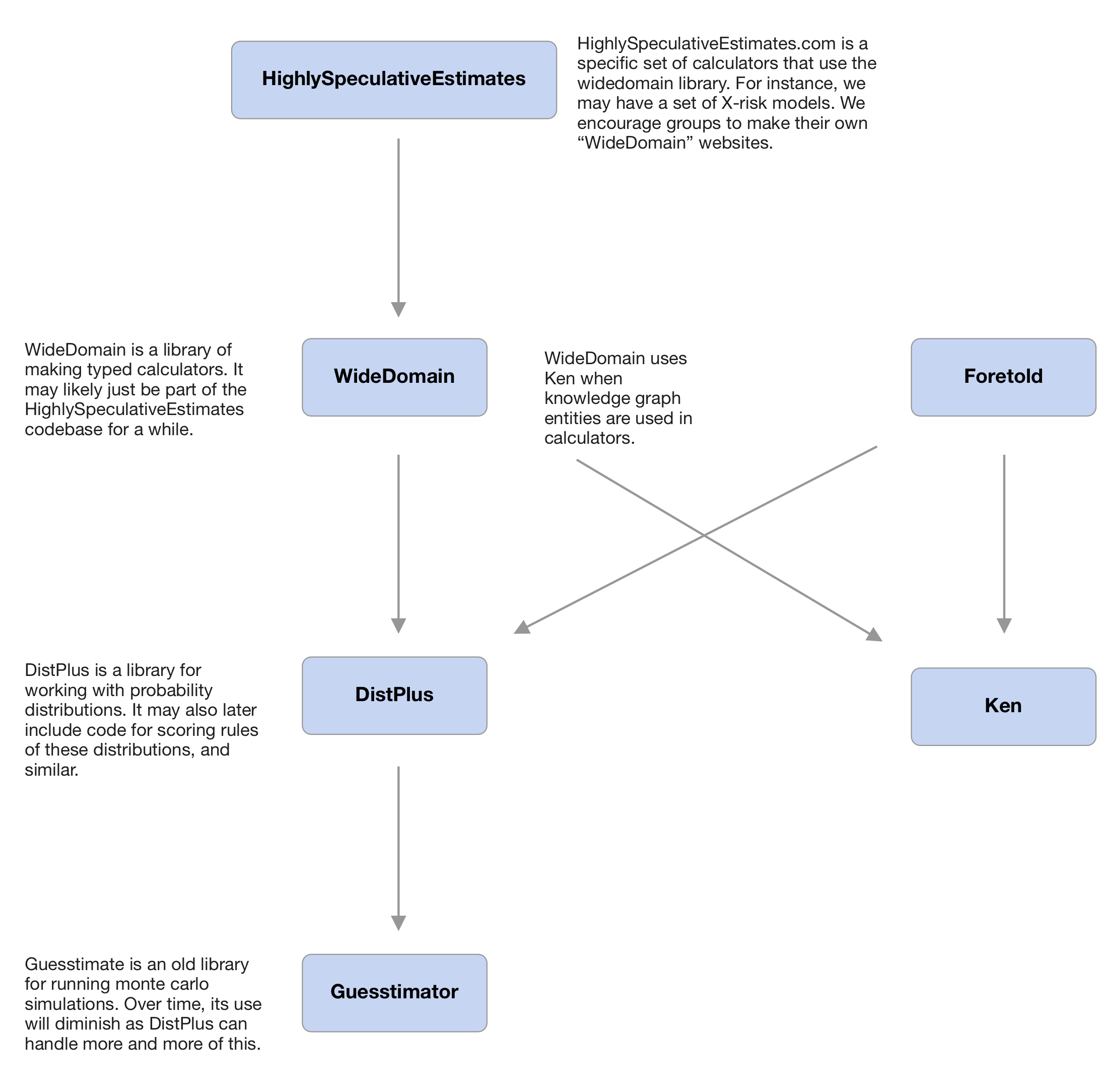

## Expected future setup

|

||||

|

||||

|

|

|

|||

|

Before Width: | Height: | Size: 347 KiB |

|

|

@ -1,13 +0,0 @@

open Jest;
open Expect;

describe("Bandwidth", () => {
  test("nrd0()", () => {
    let data = [|1., 4., 3., 2.|];
    expect(Bandwidth.nrd0(data)) |> toEqual(0.7625801874014622);
  });
  test("nrd()", () => {
    let data = [|1., 4., 3., 2.|];
    expect(Bandwidth.nrd(data)) |> toEqual(0.8981499984950554);
  });
});

|

|

@ -1,415 +0,0 @@

|

|||

open Jest;

|

||||

open Expect;

|

||||

|

||||

let shape: DistTypes.xyShape = {xs: [|1., 4., 8.|], ys: [|8., 9., 2.|]};

|

||||

|

||||

// let makeTest = (~only=false, str, item1, item2) =>

|

||||

// only

|

||||

// ? Only.test(str, () =>

|

||||

// expect(item1) |> toEqual(item2)

|

||||

// )

|

||||

// : test(str, () =>

|

||||

// expect(item1) |> toEqual(item2)

|

||||

// );

|

||||

|

||||

// let makeTestCloseEquality = (~only=false, str, item1, item2, ~digits) =>

|

||||

// only

|

||||

// ? Only.test(str, () =>

|

||||

// expect(item1) |> toBeSoCloseTo(item2, ~digits)

|

||||

// )

|

||||

// : test(str, () =>

|

||||

// expect(item1) |> toBeSoCloseTo(item2, ~digits)

|

||||

// );

|

||||

|

||||

// describe("Shape", () => {

|

||||

// describe("Continuous", () => {

|

||||

// open Continuous;

|

||||

// let continuous = make(`Linear, shape, None);

|

||||

// makeTest("minX", T.minX(continuous), 1.0);

|

||||

// makeTest("maxX", T.maxX(continuous), 8.0);

|

||||

// makeTest(

|

||||

// "mapY",

|

||||

// T.mapY(r => r *. 2.0, continuous) |> getShape |> (r => r.ys),

|

||||

// [|16., 18.0, 4.0|],

|

||||

// );

|

||||

// describe("xToY", () => {

|

||||

// describe("when Linear", () => {

|

||||

// makeTest(

|

||||

// "at 4.0",

|

||||

// T.xToY(4., continuous),

|

||||

// {continuous: 9.0, discrete: 0.0},

|

||||

// );

|

||||

// // Note: This below is weird to me, I'm not sure if it's what we want really.

|

||||

// makeTest(

|

||||

// "at 0.0",

|

||||

// T.xToY(0., continuous),

|

||||

// {continuous: 8.0, discrete: 0.0},

|

||||

// );

|

||||

// makeTest(

|

||||

// "at 5.0",

|

||||

// T.xToY(5., continuous),

|

||||

// {continuous: 7.25, discrete: 0.0},

|

||||

// );

|

||||

// makeTest(

|

||||

// "at 10.0",

|

||||

// T.xToY(10., continuous),

|

||||

// {continuous: 2.0, discrete: 0.0},

|

||||

// );

|

||||

// });

|

||||

// describe("when Stepwise", () => {

|

||||

// let continuous = make(`Stepwise, shape, None);

|

||||

// makeTest(

|

||||

// "at 4.0",

|

||||

// T.xToY(4., continuous),

|

||||

// {continuous: 9.0, discrete: 0.0},

|

||||

// );

|

||||

// makeTest(

|

||||

// "at 0.0",

|

||||

// T.xToY(0., continuous),

|

||||

// {continuous: 0.0, discrete: 0.0},

|

||||

// );

|

||||

// makeTest(

|

||||

// "at 5.0",

|

||||

// T.xToY(5., continuous),

|

||||

// {continuous: 9.0, discrete: 0.0},

|

||||

// );

|

||||

// makeTest(

|

||||

// "at 10.0",

|

||||

// T.xToY(10., continuous),

|

||||

// {continuous: 2.0, discrete: 0.0},

|

||||

// );

|

||||

// });

|

||||

// });

|

||||

// makeTest(

|

||||

// "integral",

|

||||

// T.Integral.get(~cache=None, continuous) |> getShape,

|

||||

// {xs: [|1.0, 4.0, 8.0|], ys: [|0.0, 25.5, 47.5|]},

|

||||

// );

|

||||

// makeTest(

|

||||

// "toLinear",

|

||||

// {

|

||||

// let continuous =

|

||||

// make(`Stepwise, {xs: [|1., 4., 8.|], ys: [|0.1, 5., 1.0|]}, None);

|

||||

// continuous |> toLinear |> E.O.fmap(getShape);

|

||||

// },

|

||||

// Some({

|

||||

// xs: [|1.00007, 1.00007, 4.0, 4.00007, 8.0, 8.00007|],

|

||||

// ys: [|0.0, 0.1, 0.1, 5.0, 5.0, 1.0|],

|

||||

// }),

|

||||

// );

|

||||

// makeTest(

|

||||

// "toLinear",

|

||||

// {

|

||||

// let continuous = make(`Stepwise, {xs: [|0.0|], ys: [|0.3|]}, None);

|

||||

// continuous |> toLinear |> E.O.fmap(getShape);

|

||||

// },

|

||||

// Some({xs: [|0.0|], ys: [|0.3|]}),

|

||||

// );

|

||||

// makeTest(

|

||||

// "integralXToY",

|

||||

// T.Integral.xToY(~cache=None, 0.0, continuous),

|

||||

// 0.0,

|

||||

// );

|

||||

// makeTest(

|

||||

// "integralXToY",

|

||||

// T.Integral.xToY(~cache=None, 2.0, continuous),

|

||||

// 8.5,

|

||||

// );

|

||||

// makeTest(

|

||||

// "integralXToY",

|

||||

// T.Integral.xToY(~cache=None, 100.0, continuous),

|

||||

// 47.5,

|

||||

// );

|

||||

// makeTest(

|

||||

// "integralEndY",

|

||||

// continuous

|

||||

// |> T.normalize //scaleToIntegralSum(~intendedSum=1.0)

|

||||

// |> T.Integral.sum(~cache=None),

|

||||

// 1.0,

|

||||

// );

|

||||

// });

|

||||

|

||||

// describe("Discrete", () => {

|

||||

// open Discrete;

|

||||

// let shape: DistTypes.xyShape = {

|

||||

// xs: [|1., 4., 8.|],

|

||||

// ys: [|0.3, 0.5, 0.2|],

|

||||

// };

|

||||

// let discrete = make(shape, None);

|

||||

// makeTest("minX", T.minX(discrete), 1.0);

|

||||

// makeTest("maxX", T.maxX(discrete), 8.0);

|

||||

// makeTest(

|

||||

// "mapY",

|

||||

// T.mapY(r => r *. 2.0, discrete) |> (r => getShape(r).ys),

|

||||

// [|0.6, 1.0, 0.4|],

|

||||

// );

|

||||

// makeTest(

|

||||

// "xToY at 4.0",

|

||||

// T.xToY(4., discrete),

|

||||

// {discrete: 0.5, continuous: 0.0},

|

||||

// );

|

||||

// makeTest(

|

||||

// "xToY at 0.0",

|

||||

// T.xToY(0., discrete),

|

||||

// {discrete: 0.0, continuous: 0.0},

|

||||

// );

|

||||

// makeTest(

|

||||

// "xToY at 5.0",

|

||||

// T.xToY(5., discrete),

|

||||

// {discrete: 0.0, continuous: 0.0},

|

||||

// );

|

||||

// makeTest(

|

||||

// "scaleBy",

|

||||

// scaleBy(~scale=4.0, discrete),

|

||||

// make({xs: [|1., 4., 8.|], ys: [|1.2, 2.0, 0.8|]}, None),

|

||||

// );

|

||||

// makeTest(

|

||||

// "normalize, then scale by 4.0",

|

||||

// discrete

|

||||

// |> T.normalize

|

||||

// |> scaleBy(~scale=4.0),

|

||||

// make({xs: [|1., 4., 8.|], ys: [|1.2, 2.0, 0.8|]}, None),

|

||||

// );

|

||||

// makeTest(

|

||||

// "scaleToIntegralSum: back and forth",

|

||||

// discrete

|

||||

// |> T.normalize

|

||||

// |> scaleBy(~scale=4.0)

|

||||

// |> T.normalize,

|

||||

// discrete,

|

||||

// );

|

||||

// makeTest(

|

||||

// "integral",

|

||||

// T.Integral.get(~cache=None, discrete),

|

||||

// Continuous.make(

|

||||

// `Stepwise,

|

||||

// {xs: [|1., 4., 8.|], ys: [|0.3, 0.8, 1.0|]},

|

||||

// None

|

||||

// ),

|

||||

// );

|

||||

// makeTest(

|

||||

// "integral with 1 element",

|

||||

// T.Integral.get(~cache=None, Discrete.make({xs: [|0.0|], ys: [|1.0|]}, None)),

|

||||

// Continuous.make(`Stepwise, {xs: [|0.0|], ys: [|1.0|]}, None),

|

||||

// );

|

||||

// makeTest(

|

||||

// "integralXToY",

|

||||

// T.Integral.xToY(~cache=None, 6.0, discrete),

|

||||

// 0.9,

|

||||

// );

|

||||

// makeTest("integralEndY", T.Integral.sum(~cache=None, discrete), 1.0);

|

||||

// makeTest("mean", T.mean(discrete), 3.9);

|

||||

// makeTestCloseEquality(

|

||||

// "variance",

|

||||

// T.variance(discrete),

|

||||

// 5.89,

|

||||

// ~digits=7,

|

||||

// );

|

||||

// });

|

||||

|

||||

// describe("Mixed", () => {

|

||||

// open Distributions.Mixed;

|

||||

// let discreteShape: DistTypes.xyShape = {

|

||||

// xs: [|1., 4., 8.|],

|

||||

// ys: [|0.3, 0.5, 0.2|],

|

||||

// };

|

||||

// let discrete = Discrete.make(discreteShape, None);

|

||||

// let continuous =

|

||||

// Continuous.make(

|

||||

// `Linear,

|

||||

// {xs: [|3., 7., 14.|], ys: [|0.058, 0.082, 0.124|]},

|

||||

// None

|

||||

// )

|

||||

// |> Continuous.T.normalize; //scaleToIntegralSum(~intendedSum=1.0);

|

||||

// let mixed = Mixed.make(

|

||||

// ~continuous,

|

||||

// ~discrete,

|

||||

// );

|

||||

// makeTest("minX", T.minX(mixed), 1.0);

|

||||

// makeTest("maxX", T.maxX(mixed), 14.0);

|

||||

// makeTest(

|

||||

// "mapY",

|

||||

// T.mapY(r => r *. 2.0, mixed),

|

||||

// Mixed.make(

|

||||

// ~continuous=

|

||||

// Continuous.make(

|

||||

// `Linear,

|

||||

// {

|

||||

// xs: [|3., 7., 14.|],

|

||||

// ys: [|

|

||||

// 0.11588411588411589,

|

||||

// 0.16383616383616384,

|

||||

// 0.24775224775224775,

|

||||

// |],

|

||||

// },

|

||||

// None

|

||||

// ),

|

||||

// ~discrete=Discrete.make({xs: [|1., 4., 8.|], ys: [|0.6, 1.0, 0.4|]}, None)

|

||||

// ),

|

||||

// );

|

||||

// makeTest(

|

||||

// "xToY at 4.0",

|

||||

// T.xToY(4., mixed),

|

||||

// {discrete: 0.25, continuous: 0.03196803196803197},

|

||||

// );

|

||||

// makeTest(

|

||||

// "xToY at 0.0",

|

||||

// T.xToY(0., mixed),

|

||||

// {discrete: 0.0, continuous: 0.028971028971028972},

|

||||

// );

|

||||

// makeTest(

|

||||

// "xToY at 5.0",

|

||||

// T.xToY(7., mixed),

|

||||

// {discrete: 0.0, continuous: 0.04095904095904096},

|

||||

// );

|

||||

// makeTest("integralEndY", T.Integral.sum(~cache=None, mixed), 1.0);

|

||||

// makeTest(

|

||||

// "scaleBy",

|

||||

// Mixed.scaleBy(~scale=2.0, mixed),

|

||||

// Mixed.make(

|

||||

// ~continuous=

|

||||

// Continuous.make(

|

||||

// `Linear,

|

||||

// {

|

||||

// xs: [|3., 7., 14.|],

|

||||

// ys: [|

|

||||

// 0.11588411588411589,

|

||||

// 0.16383616383616384,

|

||||

// 0.24775224775224775,

|

||||

// |],

|

||||

// },

|

||||

// None

|

||||

// ),

|

||||

// ~discrete=Discrete.make({xs: [|1., 4., 8.|], ys: [|0.6, 1.0, 0.4|]}, None),

|

||||

// ),

|

||||

// );

|

||||

// makeTest(

|

||||

// "integral",

|

||||

// T.Integral.get(~cache=None, mixed),

|

||||

// Continuous.make(

|

||||

// `Linear,

|

||||

// {

|

||||

// xs: [|1.00007, 1.00007, 3., 4., 4.00007, 7., 8., 8.00007, 14.|],

|

||||

// ys: [|

|

||||

// 0.0,

|

||||

// 0.0,

|

||||

// 0.15,

|

||||

// 0.18496503496503497,

|

||||

// 0.4349674825174825,

|

||||

// 0.5398601398601399,

|

||||

// 0.5913086913086913,

|

||||

// 0.6913122927072927,

|

||||

// 1.0,

|

||||

// |],

|

||||

// },

|

||||

// None,

|

||||

// ),

|

||||

// );

|

||||

// });

|

||||

|

||||

// describe("Distplus", () => {

|

||||

// open DistPlus;

|

||||

// let discreteShape: DistTypes.xyShape = {

|

||||

// xs: [|1., 4., 8.|],

|

||||

// ys: [|0.3, 0.5, 0.2|],

|

||||

// };

|

||||

// let discrete = Discrete.make(discreteShape, None);

|

||||

// let continuous =

|

||||

// Continuous.make(

|

||||

// `Linear,

|

||||

// {xs: [|3., 7., 14.|], ys: [|0.058, 0.082, 0.124|]},

|

||||

// None

|

||||

// )

|

||||

// |> Continuous.T.normalize; //scaleToIntegralSum(~intendedSum=1.0);

|

||||

// let mixed =

|

||||

// Mixed.make(

|

||||

// ~continuous,

|

||||

// ~discrete,

|

||||

// );

|

||||

// let distPlus =

|

||||

// DistPlus.make(

|

||||

// ~shape=Mixed(mixed),

|

||||

// ~squiggleString=None,

|

||||

// (),

|

||||

// );

|

||||

// makeTest("minX", T.minX(distPlus), 1.0);

|

||||

// makeTest("maxX", T.maxX(distPlus), 14.0);

|

||||

// makeTest(

|

||||

// "xToY at 4.0",

|

||||

// T.xToY(4., distPlus),

|

||||

// {discrete: 0.25, continuous: 0.03196803196803197},

|

||||

// );

|

||||

// makeTest(

|

||||

// "xToY at 0.0",

|

||||

// T.xToY(0., distPlus),

|

||||

// {discrete: 0.0, continuous: 0.028971028971028972},

|

||||

// );

|

||||

// makeTest(

|

||||

// "xToY at 5.0",

|

||||

// T.xToY(7., distPlus),

|

||||

// {discrete: 0.0, continuous: 0.04095904095904096},

|

||||

// );

|

||||

// makeTest("integralEndY", T.Integral.sum(~cache=None, distPlus), 1.0);

|

||||

// makeTest(

|

||||

// "integral",

|

||||

// T.Integral.get(~cache=None, distPlus) |> T.toContinuous,

|

||||

// Some(

|

||||

// Continuous.make(

|

||||

// `Linear,

|

||||

// {

|

||||

// xs: [|1.00007, 1.00007, 3., 4., 4.00007, 7., 8., 8.00007, 14.|],

|

||||

// ys: [|

|

||||

// 0.0,

|

||||

// 0.0,

|

||||

// 0.15,

|

||||

// 0.18496503496503497,

|

||||

// 0.4349674825174825,

|

||||

// 0.5398601398601399,

|

||||

// 0.5913086913086913,

|

||||

// 0.6913122927072927,

|

||||

// 1.0,

|

||||

// |],

|

||||

// },

|

||||

// None,

|

||||

// ),

|

||||

// ),

|

||||

// );

|

||||

// });

|

||||

|

||||

// describe("Shape", () => {

|

||||

// let mean = 10.0;

|

||||

// let stdev = 4.0;

|

||||

// let variance = stdev ** 2.0;

|

||||

// let numSamples = 10000;

|

||||

// open Distributions.Shape;

|

||||

// let normal: SymbolicTypes.symbolicDist = `Normal({mean, stdev});

|

||||

// let normalShape = ExpressionTree.toShape(numSamples, `SymbolicDist(normal));

|

||||

// let lognormal = SymbolicDist.Lognormal.fromMeanAndStdev(mean, stdev);

|

||||

// let lognormalShape = ExpressionTree.toShape(numSamples, `SymbolicDist(lognormal));

|

||||

|

||||

// makeTestCloseEquality(

|

||||

// "Mean of a normal",

|

||||

// T.mean(normalShape),

|

||||

// mean,

|

||||

// ~digits=2,

|

||||

// );

|

||||

// makeTestCloseEquality(

|

||||

// "Variance of a normal",

|

||||

// T.variance(normalShape),

|

||||

// variance,

|

||||

// ~digits=1,

|

||||

// );

|

||||

// makeTestCloseEquality(

|

||||

// "Mean of a lognormal",

|

||||

// T.mean(lognormalShape),

|

||||

// mean,

|

||||

// ~digits=2,

|

||||

// );

|

||||

// makeTestCloseEquality(

|

||||

// "Variance of a lognormal",

|

||||

// T.variance(lognormalShape),

|

||||

// variance,

|

||||

// ~digits=0,

|

||||

// );

|

||||

// });

|

||||

// });

|

||||

|

|

@ -1,57 +0,0 @@

|

|||

open Jest;

|

||||

open Expect;

|

||||

|

||||

let makeTest = (~only=false, str, item1, item2) =>

|

||||

only

|

||||

? Only.test(str, () =>

|

||||

expect(item1) |> toEqual(item2)

|

||||

)

|

||||

: test(str, () =>

|

||||

expect(item1) |> toEqual(item2)

|

||||

);

|

||||

|

||||

let evalParams: ExpressionTypes.ExpressionTree.evaluationParams = {

|

||||

samplingInputs: {

|

||||

sampleCount: 1000,

|

||||

outputXYPoints: 10000,

|

||||

kernelWidth: None,

|

||||

shapeLength: 1000,

|

||||

},

|

||||

environment:

|

||||

[|

|

||||

("K", `SymbolicDist(`Float(1000.0))),

|

||||

("M", `SymbolicDist(`Float(1000000.0))),

|

||||

("B", `SymbolicDist(`Float(1000000000.0))),

|

||||

("T", `SymbolicDist(`Float(1000000000000.0))),

|

||||

|]

|

||||

->Belt.Map.String.fromArray,

|

||||

evaluateNode: ExpressionTreeEvaluator.toLeaf,

|

||||

};

|

||||

|

||||

let shape1: DistTypes.xyShape = {xs: [|1., 4., 8.|], ys: [|0.2, 0.4, 0.8|]};

|

||||

|

||||

describe("XYShapes", () => {

|

||||

describe("logScorePoint", () => {

|

||||

makeTest(

|

||||

"When identical",

|

||||

{

|

||||

let foo =

|

||||

HardcodedFunctions.(

|

||||

makeRenderedDistFloat("scaleMultiply", (dist, float) =>

|

||||

verticalScaling(`Multiply, dist, float)

|

||||

)

|

||||

);

|

||||

|

||||

TypeSystem.Function.T.run(

|

||||

evalParams,

|

||||

[|

|

||||

`SymbolicDist(`Float(100.0)),

|

||||

`SymbolicDist(`Float(1.0)),

|

||||

|],

|

||||

foo,

|

||||

);

|

||||

},

|

||||

Error("Sad"),

|

||||

)

|

||||

})

|

||||

});

|

||||

|

|

@ -1,24 +0,0 @@

open Jest;
open Expect;

let makeTest = (~only=false, str, item1, item2) =>
  only
    ? Only.test(str, () =>
        expect(item1) |> toEqual(item2)
      )
    : test(str, () =>
        expect(item1) |> toEqual(item2)
      );

describe("Lodash", () => {
  describe("Lodash", () => {
    makeTest("min", Lodash.min([|1, 3, 4|]), 1);
    makeTest("max", Lodash.max([|1, 3, 4|]), 4);
    makeTest("uniq", Lodash.uniq([|1, 3, 4, 4|]), [|1, 3, 4|]);
    makeTest(
      "countBy",
      Lodash.countBy([|1, 3, 4, 4|], r => r),
      Js.Dict.fromArray([|("1", 1), ("3", 1), ("4", 2)|]),
    );
  })
});

|

|

@ -1,51 +0,0 @@

|

|||

open Jest;

|

||||

open Expect;

|

||||

|

||||

let makeTest = (~only=false, str, item1, item2) =>

|

||||

only

|

||||

? Only.test(str, () =>

|

||||

expect(item1) |> toEqual(item2)

|

||||

)

|

||||

: test(str, () =>

|

||||

expect(item1) |> toEqual(item2)

|

||||

);

|

||||

|

||||

describe("Lodash", () => {

|

||||

describe("Lodash", () => {

|

||||

makeTest(

|

||||

"split",

|

||||

SamplesToShape.Internals.T.splitContinuousAndDiscrete([|1.432, 1.33455, 2.0|]),

|

||||

([|1.432, 1.33455, 2.0|], E.FloatFloatMap.empty()),

|

||||

);

|

||||

makeTest(

|

||||

"split",

|

||||

SamplesToShape.Internals.T.splitContinuousAndDiscrete([|

|

||||

1.432,

|

||||

1.33455,

|

||||

2.0,

|

||||

2.0,

|

||||

2.0,

|

||||

2.0,

|

||||

|])

|

||||

|> (((c, disc)) => (c, disc |> E.FloatFloatMap.toArray)),

|

||||

([|1.432, 1.33455|], [|(2.0, 4.0)|]),

|

||||

);

|

||||

|

||||

let makeDuplicatedArray = count => {

|

||||

let arr = Belt.Array.range(1, count) |> E.A.fmap(float_of_int);

|

||||

let sorted = arr |> Belt.SortArray.stableSortBy(_, compare);

|

||||

E.A.concatMany([|sorted, sorted, sorted, sorted|])

|

||||

|> Belt.SortArray.stableSortBy(_, compare);

|

||||

};

|

||||

|

||||

let (_, discrete) =

|

||||

SamplesToShape.Internals.T.splitContinuousAndDiscrete(makeDuplicatedArray(10));

|

||||

let toArr = discrete |> E.FloatFloatMap.toArray;

|

||||

makeTest("splitMedium", toArr |> Belt.Array.length, 10);

|

||||

|

||||

let (c, discrete) =

|

||||

SamplesToShape.Internals.T.splitContinuousAndDiscrete(makeDuplicatedArray(500));

|

||||

let toArr = discrete |> E.FloatFloatMap.toArray;

|

||||

makeTest("splitMedium", toArr |> Belt.Array.length, 500);

|

||||

})

|

||||

});
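The `split` cases above are consistent with a simple rule: values that occur once stay in the continuous samples, and values that repeat are collected as `(value, count)` pairs. A minimal TypeScript sketch of that rule, assuming "occurs more than once" is the threshold; the removed `SamplesToShape` implementation may use a different criterion, and the function below is illustrative rather than a port of it:

```typescript
// Illustrative only: treats any value seen more than once as discrete.
function splitContinuousAndDiscrete(samples: number[]): {
  continuous: number[];
  discrete: Array<[number, number]>;
} {
  // Count occurrences of each sample value (keyed by its string form).
  const counts: Record<string, number> = {};
  for (const x of samples) counts[String(x)] = (counts[String(x)] || 0) + 1;

  const continuous: number[] = [];
  const discrete: Array<[number, number]> = [];
  const seen: Record<string, boolean> = {};
  for (const x of samples) {
    const key = String(x);
    if (counts[key] === 1) {
      continuous.push(x); // unique value: keep it, in input order
    } else if (!seen[key]) {
      seen[key] = true;
      discrete.push([x, counts[key]]); // repeated value: emit once with its count
    }
  }
  return { continuous, discrete };
}

const result = splitContinuousAndDiscrete([1.432, 1.33455, 2.0, 2.0, 2.0, 2.0]);
console.log(result.continuous, result.discrete);
```

For the second `split` input this yields continuous samples `[1.432, 1.33455]` and one discrete pair `[2, 4]`, matching the expectation in the test.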

|

||||

|

|

@ -1,63 +0,0 @@

|

|||

open Jest;

|

||||

open Expect;

|

||||

|

||||

let makeTest = (~only=false, str, item1, item2) =>

|

||||

only

|

||||

? Only.test(str, () =>

|

||||

expect(item1) |> toEqual(item2)

|

||||

)

|

||||

: test(str, () =>

|

||||

expect(item1) |> toEqual(item2)

|

||||

);

|

||||

|

||||

let shape1: DistTypes.xyShape = {xs: [|1., 4., 8.|], ys: [|0.2, 0.4, 0.8|]};

|

||||

|

||||

let shape2: DistTypes.xyShape = {

|

||||

xs: [|1., 5., 10.|],

|

||||

ys: [|0.2, 0.5, 0.8|],

|

||||

};

|

||||

|

||||

let shape3: DistTypes.xyShape = {

|

||||

xs: [|1., 20., 50.|],

|

||||

ys: [|0.2, 0.5, 0.8|],

|

||||

};

|

||||

|

||||

describe("XYShapes", () => {

|

||||

describe("logScorePoint", () => {

|

||||

makeTest(

|

||||

"When identical",

|

||||

XYShape.logScorePoint(30, shape1, shape1),

|

||||

Some(0.0),

|

||||

);

|

||||

makeTest(

|

||||

"When similar",

|

||||

XYShape.logScorePoint(30, shape1, shape2),

|

||||

Some(1.658971191043856),

|

||||

);

|

||||

makeTest(

|

||||

"When very different",

|

||||

XYShape.logScorePoint(30, shape1, shape3),

|

||||

Some(210.3721280423322),

|

||||

);

|

||||

});

|

||||

// describe("transverse", () => {

|

||||

// makeTest(

|

||||

// "When very different",

|

||||

// XYShape.Transversal._transverse(

|

||||

// (aCurrent, aLast) => aCurrent +. aLast,

|

||||

// [|1.0, 2.0, 3.0, 4.0|],

|

||||

// ),

|

||||

// [|1.0, 3.0, 6.0, 10.0|],

|

||||

// )

|

||||

// });

|

||||

describe("integrateWithTriangles", () => {

|

||||

makeTest(

|

||||

"integrates correctly",

|

||||

XYShape.Range.integrateWithTriangles(shape1),

|

||||

Some({

|

||||

xs: [|1., 4., 8.|],

|

||||

ys: [|0.0, 0.9000000000000001, 3.3000000000000007|],

|

||||

}),

|

||||

)

|

||||

});

|

||||

});
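The expected values in the `integrateWithTriangles` case above are consistent with a cumulative trapezoid rule: (0.2 + 0.4) / 2 × 3 = 0.9, then 0.9 + (0.4 + 0.8) / 2 × 4 = 3.3. A short TypeScript sketch of that calculation, assuming linear interpolation between points; `integrateCumulative` is a hypothetical name and this is not the removed `XYShape` source:

```typescript
type XYShape = { xs: number[]; ys: number[] };

// Cumulative trapezoid-rule integral of a piecewise-linear shape.
// The first output value is 0; each later value adds the area of one trapezoid.
function integrateCumulative(shape: XYShape): XYShape {
  if (shape.xs.length === 0) return { xs: [], ys: [] };
  const ys: number[] = [0];
  for (let i = 1; i < shape.xs.length; i++) {
    const width = shape.xs[i] - shape.xs[i - 1];
    const meanHeight = (shape.ys[i] + shape.ys[i - 1]) / 2;
    ys.push(ys[i - 1] + width * meanHeight);
  }
  return { xs: shape.xs.slice(), ys };
}

const shape1: XYShape = { xs: [1, 4, 8], ys: [0.2, 0.4, 0.8] };
const integral = integrateCumulative(shape1);
console.log(integral.ys.map((y) => y.toFixed(2)).join(", "));
```

Up to floating-point rounding, the result agrees with the `ys` expected by the test (`0.0`, `0.9`, `3.3`).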

|

||||

86

package.json

|

|

@ -1,77 +1,21 @@

|

|||

{

|

||||

"name": "estiband",

|

||||

"version": "0.1.0",

|

||||

"homepage": "https://foretold-app.github.io/estiband/",

|

||||

"private": true,

|

||||

"name": "squiggle",

|

||||

"scripts": {

|

||||

"build": "bsb -make-world",

|

||||

"build:style": "tailwind build src/styles/index.css -o src/styles/tailwind.css",

|

||||

"start": "bsb -make-world -w -ws _ ",

|

||||

"clean": "bsb -clean-world",

|

||||

"parcel": "parcel ./src/index.html --public-url / --no-autoinstall -- watch",

|

||||

"parcel-build": "parcel build ./src/index.html --no-source-maps --no-autoinstall",

|

||||

"showcase": "PORT=12345 parcel showcase/index.html",

|

||||

"server": "moduleserve ./ --port 8000",

|

||||

"predeploy": "parcel build ./src/index.html --no-source-maps --no-autoinstall",

|

||||

"deploy": "gh-pages -d dist",

|

||||

"test": "jest",

|

||||

"test:ci": "yarn jest",

|

||||

"watch:test": "jest --watchAll",

|

||||

"watch:s": "yarn jest -- Converter_test --watch"

|

||||

"build:lang": "cd packages/squiggle-lang && yarn && yarn build && yarn package",

|

||||

"storybook:components": "cd packages/components && yarn && yarn storybook",

|

||||

"build-storybook:components": "cd packages/components && yarn && yarn build-storybook",

|

||||

"build:components": "cd packages/components && yarn && yarn package",

|

||||

"build:playground": "cd packages/playground && yarn && yarn parcel-build",

|

||||

"ci:lang": "yarn workspace @squiggle/lang ci",

|

||||

"ci:components": "yarn ci:lang && yarn workspace @squiggle/components ci",

|

||||

"ci:playground": "yarn ci:components && yarn workspace @squiggle/playground ci"

|

||||

},

|

||||

"keywords": [

|

||||

"BuckleScript",

|

||||

"ReasonReact",

|

||||

"reason-react"

|

||||

"workspaces": [

|

||||

"packages/*"

|

||||

],

|

||||

"author": "",

|

||||

"license": "MIT",

|

||||

"dependencies": {

|

||||

"@foretold/components": "0.0.6",

|

||||

"@glennsl/bs-json": "^5.0.2",

|

||||

"ace-builds": "^1.4.12",

|

||||

"antd": "3.17.0",

|

||||

"autoprefixer": "9.7.4",

|

||||

"babel-plugin-transform-es2015-modules-commonjs": "^6.26.2",

|

||||

"binary-search-tree": "0.2.6",

|

||||

"bs-ant-design-alt": "2.0.0-alpha.33",

|

||||

"bs-css": "11.0.0",

|

||||

"bs-moment": "0.4.5",

|

||||

"bs-reform": "9.7.1",

|

||||

"bsb-js": "1.1.7",

|

||||

"d3": "5.15.0",

|

||||

"gh-pages": "2.2.0",

|

||||

"jest": "^25.5.1",

|

||||

"jstat": "1.9.2",

|

||||

"lenses-ppx": "5.1.0",

|

||||

"less": "3.10.3",

|

||||

"lodash": "4.17.15",

|

||||

"mathjs": "5.10.3",

|

||||

"moduleserve": "0.9.1",

|

||||

"moment": "2.24.0",

|

||||

"pdfast": "^0.2.0",

|

||||

"postcss-cli": "7.1.0",

|

||||

"rationale": "0.2.0",

|

||||

"react": "^16.10.0",

|

||||

"react-ace": "^9.2.0",

|

||||

"react-dom": "^0.13.0 || ^0.14.0 || ^15.0.1 || ^16.0.0",

|

||||

"react-use": "^13.27.0",

|

||||

"react-vega": "^7.4.1",

|

||||

"reason-react": ">=0.7.0",

|

||||

"reschema": "1.3.0",

|

||||

"tailwindcss": "1.2.0",

|

||||

"vega": "*",

|

||||

"vega-embed": "6.6.0",

|

||||

"vega-lite": "*"

|

||||

"resolutions": {

|

||||

"@types/react": "17.0.39"

|

||||

},

|

||||

"devDependencies": {

|

||||

"@glennsl/bs-jest": "^0.5.1",

|

||||

"bs-platform": "7.3.2",

|

||||

"parcel-bundler": "1.12.4",

|

||||

"parcel-plugin-bundle-visualiser": "^1.2.0",

|

||||

"parcel-plugin-less-js-enabled": "1.0.2"

|

||||

},

|

||||

"alias": {

|

||||

"react": "./node_modules/react",

|

||||

"react-dom": "./node_modules/react-dom"

|

||||

}

|

||||

"packageManager": "yarn@1.22.17"

|

||||

}

|

||||

|

|

|

|||

25

packages/components/.gitignore

vendored

Normal file

|

|

@ -0,0 +1,25 @@

# See https://help.github.com/articles/ignoring-files/ for more about ignoring files.

# dependencies
/node_modules
/.pnp
.pnp.js

# testing
/coverage

# production
/build

# misc
.DS_Store
.env.local
.env.development.local
.env.test.local
.env.production.local

npm-debug.log*
yarn-debug.log*
yarn-error.log*
storybook-static
dist

35

packages/components/.storybook/main.js

Normal file

|

|

@ -0,0 +1,35 @@

//const TsconfigPathsPlugin = require('tsconfig-paths-webpack-plugin');

module.exports = {
  /* webpackFinal: async (config) => {
    config.resolve.plugins = [
      ...(config.resolve.plugins || []),
      new TsconfigPathsPlugin({
        extensions: config.resolve.extensions,
      }),
    ];
    return config;
  },*/
  "stories": [
    "../src/**/*.stories.mdx",
    "../src/**/*.stories.@(js|jsx|ts|tsx)"
  ],
  "addons": [
    "@storybook/addon-links",
    "@storybook/addon-essentials",
    "@storybook/preset-create-react-app"
  ],
  "framework": "@storybook/react",
  "core": {
    "builder": "webpack5"
  },
  typescript: {
    check: false,
    checkOptions: {},
    reactDocgen: 'react-docgen-typescript',
    reactDocgenTypescriptOptions: {
      shouldExtractLiteralValuesFromEnum: true,
      propFilter: (prop) => (prop.parent ? !/node_modules/.test(prop.parent.fileName) : true),
    },
  },
}

9

packages/components/.storybook/preview.js

Normal file

|

|

@ -0,0 +1,9 @@

export const parameters = {
  actions: { argTypesRegex: "^on[A-Z].*" },
  controls: {
    matchers: {
      color: /(background|color)$/i,
      date: /Date$/,
    },
  },
}

6

packages/components/README.md

Normal file

|

|

@ -0,0 +1,6 @@

# Squiggle Components

This package contains all the components for squiggle. These can be used either
as a library or hosted as a [storybook](https://storybook.js.org/).

To run the storybook, run `yarn` then `yarn storybook`.

57037

packages/components/package-lock.json

generated

Normal file

79

packages/components/package.json

Normal file

|

|

@ -0,0 +1,79 @@

{
  "name": "@squiggle/components",
  "version": "0.1.0",
  "private": true,
  "dependencies": {
    "@squiggle/lang": "0.1.9",
    "@testing-library/jest-dom": "^5.16.2",
    "@testing-library/react": "^12.1.2",
    "@testing-library/user-event": "^13.5.0",
    "@types/jest": "^27.4.0",
    "@types/lodash": "^4.14.178",
    "@types/node": "^17.0.16",
    "@types/react": "^17.0.39",
    "@types/react-dom": "^17.0.11",
    "cross-env": "^7.0.3",
    "lodash": "^4.17.21",
    "react": "^17.0.2",
    "react-dom": "^17.0.2",
    "react-scripts": "5.0.0",
    "react-vega": "^7.4.4",
    "tsconfig-paths-webpack-plugin": "^3.5.2",
    "typescript": "^4.5.5",
    "vega": "^5.21.0",
    "vega-embed": "^6.20.6",
    "vega-lite": "^5.2.0",
    "web-vitals": "^2.1.4",
    "webpack-cli": "^4.9.2"
  },
  "scripts": {
    "storybook": "cross-env REACT_APP_FAST_REFRESH=false && start-storybook -p 6006 -s public",
    "build-storybook": "build-storybook -s public",
    "package": "tsc",
    "ci": "yarn package"
  },
  "eslintConfig": {
    "extends": [
      "react-app",
      "react-app/jest"
    ],
    "overrides": [
      {
        "files": [
          "**/*.stories.*"
        ],
        "rules": {
          "import/no-anonymous-default-export": "off"
        }
      }
    ]
  },
  "browserslist": {
    "production": [
      ">0.2%",
      "not dead",
      "not op_mini all"
    ],
    "development": [
      "last 1 chrome version",
      "last 1 firefox version",
      "last 1 safari version"
    ]
  },
  "devDependencies": {
    "@storybook/addon-actions": "^6.4.18",
    "@storybook/addon-essentials": "^6.4.18",
    "@storybook/addon-links": "^6.4.18",
    "@storybook/builder-webpack5": "^6.4.18",
    "@storybook/manager-webpack5": "^6.4.18",
    "@storybook/node-logger": "^6.4.18",
    "@storybook/preset-create-react-app": "^4.0.0",
    "@storybook/react": "^6.4.18",
    "webpack": "^5.68.0"
  },
  "resolutions": {
    "@types/react": "17.0.39"
  },
  "main": "dist/index.js",
  "types": "dist/index.d.ts"
}

BIN

packages/components/public/favicon.ico

Normal file

|

After Width: | Height: | Size: 13 KiB |

19

packages/components/public/index.html

Normal file

|

|

@ -0,0 +1,19 @@

|

|||

<!DOCTYPE html>

|

||||

<html lang="en">

|

||||

<head>

|

||||

<meta charset="utf-8" />

|

||||

<link rel="icon" href="%PUBLIC_URL%/favicon.ico" />

|

||||

<meta name="viewport" content="width=device-width, initial-scale=1" />

|

||||

<meta name="theme-color" content="#000000" />

|

||||

<meta

|

||||

name="description"

|

||||

content="Squiggle components"

|

||||

/>

|

||||

<link rel="apple-touch-icon" href="%PUBLIC_URL%/logo192.png" />

|

||||

<title>Squiggle Components</title>

|

||||

</head>

|

||||

<body>

|

||||

<noscript>You need to enable JavaScript to run this app.</noscript>

|

||||

<div id="root"></div>

|

||||

</body>

|

||||

</html>

|

||||

BIN

packages/components/public/logo16.png

Normal file

|

After Width: | Height: | Size: 327 B |

BIN

packages/components/public/logo192.png

Normal file

|

After Width: | Height: | Size: 7.4 KiB |

BIN

packages/components/public/logo32.png

Normal file

|

After Width: | Height: | Size: 697 B |

BIN

packages/components/public/logo42.png

Normal file

|

After Width: | Height: | Size: 1.0 KiB |

BIN

packages/components/public/logo512.png

Normal file

|

After Width: | Height: | Size: 31 KiB |

25

packages/components/public/manifest.json

Normal file

|

|

@ -0,0 +1,25 @@

|

|||

{

|

||||

"short_name": "React App",

|

||||

"name": "Create React App Sample",

|

||||

"icons": [

|

||||

{

|

||||

"src": "favicon.ico",

|

||||

"sizes": "64x64 32x32 24x24 16x16",

|

||||

"type": "image/x-icon"

|

||||

},

|

||||

{

|

||||

"src": "logo192.png",

|

||||

"type": "image/png",

|

||||

"sizes": "192x192"

|

||||

},

|

||||

{

|

||||

"src": "logo512.png",

|

||||

"type": "image/png",

|

||||

"sizes": "512x512"

|

||||

}

|

||||

],

|

||||

"start_url": ".",

|

||||

"display": "standalone",

|

||||

"theme_color": "#000000",

|

||||

"background_color": "#ffffff"

|

||||

}

|

||||

3

packages/components/public/robots.txt

Normal file

|

|

@ -0,0 +1,3 @@

|

|||

# https://www.robotstxt.org/robotstxt.html

|

||||

User-agent: *

|

||||

Disallow:

|

||||

676

packages/components/public/squiggle.svg

Normal file

|

After Width: | Height: | Size: 107 KiB |

5

packages/components/shell.nix

Normal file

|

|

@ -0,0 +1,5 @@

|

|||

{ pkgs ? import <nixpkgs> {} }:

|

||||

pkgs.mkShell {

|

||||

name = "squiggle-components";

|

||||

buildInputs = with pkgs; [ nodePackages.yarn nodejs ];

|

||||

}

|

||||

1

packages/components/src/SquiggleChart.js.map

Normal file

355

packages/components/src/SquiggleChart.tsx

Normal file

|

|

@ -0,0 +1,355 @@

|

|||

import * as React from 'react';

|

||||

import * as _ from 'lodash';

|

||||

import type { Spec } from 'vega';

|

||||

import { run } from '@squiggle/lang';

|

||||

import type { DistPlus, SamplingInputs } from '@squiggle/lang';

|

||||

import { createClassFromSpec } from 'react-vega';

|

||||

import * as chartSpecification from './spec-distributions.json'

|

||||

import * as percentilesSpec from './spec-pertentiles.json'

|

||||

|

||||

let SquiggleVegaChart = createClassFromSpec({'spec': chartSpecification as Spec});

|

||||

|

||||

let SquigglePercentilesChart = createClassFromSpec({'spec': percentilesSpec as Spec});

|

||||

|

||||

export interface SquiggleChartProps {

|

||||

/** The input string for squiggle */

|

||||

squiggleString : string,

|

||||

|

||||

/** If the output requires Monte Carlo sampling, the number of samples */

|

||||

sampleCount? : number,

|

||||

/** The number of points returned to draw the distribution */

|

||||

outputXYPoints? : number,

|

||||

kernelWidth? : number,

|

||||

pointDistLength? : number,

|

||||

/** If the result is a function, where the function starts */

|

||||

diagramStart? : number,

|

||||

/** If the result is a function, where the function ends */

|

||||

diagramStop? : number,

|

||||

/** If the result is a function, how many points along the function it samples */

|

||||

diagramCount? : number

|

||||

}

|

||||

|

||||

export const SquiggleChart : React.FC<SquiggleChartProps> = props => {

|

||||

let samplingInputs : SamplingInputs = {

|

||||

sampleCount : props.sampleCount,

|

||||

outputXYPoints : props.outputXYPoints,

|

||||

kernelWidth : props.kernelWidth,

|

||||

pointDistLength : props.pointDistLength

|

||||

}

|

||||

|

||||

|

||||

let result = run(props.squiggleString, samplingInputs);

|

||||

console.log(result)

|

||||

if (result.tag === "Ok") {

|

||||

let chartResults = result.value.map(chartResult => {

|

||||

console.log(chartResult)

|

||||

if(chartResult["NAME"] === "Float"){

|

||||

return <MakeNumberShower precision={3} number={chartResult["VAL"]} />;

|

||||

}

|

||||

else if(chartResult["NAME"] === "DistPlus"){

|

||||

let shape = chartResult.VAL.pointSetDist;

|

||||

if(shape.tag === "Continuous"){

|

||||

let xyShape = shape.value.xyShape;

|

||||

let totalY = xyShape.ys.reduce((a, b) => a + b);

|

||||

let total = 0;

|

||||

let cdf = xyShape.ys.map(y => {

|

||||

total += y;

|

||||

return total / totalY;

|

||||

})

|

||||

let values = _.zip(cdf, xyShape.xs, xyShape.ys).map(([c, x, y ]) => ({cdf: (c * 100).toFixed(2) + "%", x: x, y: y}));

|

||||

|

||||

return (

|

||||

<SquiggleVegaChart

|

||||

data={{"con": values}}

|

||||

/>

|

||||

);

|

||||

}

|

||||

else if(shape.tag === "Discrete"){

|

||||

let xyShape = shape.value.xyShape;

|

||||

let totalY = xyShape.ys.reduce((a, b) => a + b);

|

||||

let total = 0;

|

||||

let cdf = xyShape.ys.map(y => {

|

||||

total += y;

|

||||

return total / totalY;

|

||||

})

|

||||

let values = _.zip(cdf, xyShape.xs, xyShape.ys).map(([c, x,y]) => ({cdf: (c * 100).toFixed(2) + "%", x: x, y: y}));

|

||||

|

||||

return (

|

||||

<SquiggleVegaChart

|

||||

data={{"dis": values}}

|

||||

/>

|

||||

);

|

||||

}

|

||||

else if(shape.tag === "Mixed"){

|

||||

let discreteShape = shape.value.discrete.xyShape;

|

||||

let totalDiscrete = discreteShape.ys.reduce((a, b) => a + b);

|

||||

|

||||

let discretePoints = _.zip(discreteShape.xs, discreteShape.ys);

|

||||

let continuousShape = shape.value.continuous.xyShape;

|

||||

let continuousPoints = _.zip(continuousShape.xs, continuousShape.ys);

|

||||

|

||||

interface labeledPoint {

|

||||

x: number,

|

||||

y: number,

|

||||

type: "discrete" | "continuous"

|

||||

};

|

||||

|

||||

let markedDisPoints : labeledPoint[] = discretePoints.map(([x,y]) => ({x: x, y: y, type: "discrete"}))

|

||||

let markedConPoints : labeledPoint[] = continuousPoints.map(([x,y]) => ({x: x, y: y, type: "continuous"}))

|

||||

|

||||

let sortedPoints = _.sortBy(markedDisPoints.concat(markedConPoints), 'x')

|

||||

|

||||

let totalContinuous = 1 - totalDiscrete;

|

||||

let totalY = continuousShape.ys.reduce((a:number, b:number) => a + b);

|

||||

|

||||

let total = 0;

|

||||

let cdf = sortedPoints.map((point: labeledPoint) => {

|

||||

if(point.type == "discrete") {

|

||||

total += point.y;

|

||||

return total;

|

||||

}

|

||||

else if (point.type == "continuous") {

|

||||

total += point.y / totalY * totalContinuous;

|

||||

return total;

|

||||

}

|

||||

});

|

||||

|

||||

interface cdfLabeledPoint {

|

||||

cdf: string,

|

||||

x: number,

|

||||

y: number,

|

||||

type: "discrete" | "continuous"

|

||||

}

|

||||

let cdfLabeledPoint : cdfLabeledPoint[] = _.zipWith(cdf, sortedPoints, (c: number, point: labeledPoint) => ({...point, cdf: (c * 100).toFixed(2) + "%"}))

|

||||

let continuousValues = cdfLabeledPoint.filter(x => x.type == "continuous")

|

||||

let discreteValues = cdfLabeledPoint.filter(x => x.type == "discrete")

|

||||

|

||||

return (

|

||||

<SquiggleVegaChart

|

||||

data={{"con": continuousValues, "dis": discreteValues}}

|

||||

/>

|

||||

);

|

||||

}

|

||||

}

|

||||

else if(chartResult.NAME === "Function"){

|

||||

// We are looking at a function. In this case, we draw a Percentiles chart

|

||||

let start = props.diagramStart ? props.diagramStart : 0

|

||||

let stop = props.diagramStop ? props.diagramStop : 10

|

||||

let count = props.diagramCount ? props.diagramCount : 100 // number of sample points, so the default step is (stop - start) / 100

|

||||

let step = (stop - start)/ count

|

||||

let data = _.range(start, stop, step).map(x => {

|

||||

if(chartResult.NAME=="Function"){

|

||||

let result = chartResult.VAL(x);

|

||||

if(result.tag == "Ok"){

|

||||

let percentileArray = [

|

||||

0.01,

|

||||

0.05,

|

||||

0.1,

|

||||

0.2,

|

||||

0.3,

|

||||

0.4,

|

||||

0.5,

|

||||

0.6,

|

||||

0.7,

|

||||

0.8,

|

||||

0.9,

|

||||

0.95,

|

||||

0.99

|

||||

]

|

||||

|

||||

let percentiles = getPercentiles(percentileArray, result.value);

|

||||

return {

|

||||

"x": x,

|

||||

"p1": percentiles[0],

|

||||

"p5": percentiles[1],

|

||||

"p10": percentiles[2],

|

||||

"p20": percentiles[3],

|

||||

"p30": percentiles[4],

|

||||

"p40": percentiles[5],

|

||||

"p50": percentiles[6],

|

||||

"p60": percentiles[7],

|

||||

"p70": percentiles[8],

|

||||

"p80": percentiles[9],

|

||||

"p90": percentiles[10],

|

||||

"p95": percentiles[11],

|

||||

"p99": percentiles[12]

|

||||

}

|

||||

|

||||

}

|

||||

|

||||

}

|

||||

return 0;

|

||||

})

|

||||

return <SquigglePercentilesChart data={{"facet": data}} />

|

||||

}

|

||||

})

|

||||

return <>{chartResults}</>;

|

||||

}

|

||||

else if(result.tag == "Error") {

|

||||

// At this point, we came across an error. What was our error?

|

||||

return (<p>{"Error parsing Squiggle: " + result.value}</p>)

|

||||

|

||||

}

|

||||

return (<p>{"Invalid Response"}</p>)

|

||||

};

|

||||

|

||||

function getPercentiles(percentiles:number[], t : DistPlus) {

|

||||

if(t.pointSetDist.tag == "Discrete") {

|

||||

let total = 0;

|

||||

let maxX = _.max(t.pointSetDist.value.xyShape.xs)

|

||||

let bounds = percentiles.map(_ => maxX);

|

||||

_.zipWith(t.pointSetDist.value.xyShape.xs,t.pointSetDist.value.xyShape.ys, (x,y) => {

|

||||

total += y

|

||||

percentiles.forEach((v, i) => {

|

||||

if(total > v && bounds[i] == maxX){

|

||||

bounds[i] = x

|

||||

}

|

||||

})

|

||||

});

|

||||

return bounds;

|

||||

}

|

||||

else if(t.pointSetDist.tag == "Continuous"){

|

||||

let total = 0;

|

||||

let maxX = _.max(t.pointSetDist.value.xyShape.xs)

|

||||

let totalY = _.sum(t.pointSetDist.value.xyShape.ys)

|

||||

let bounds = percentiles.map(_ => maxX);

|

||||

_.zipWith(t.pointSetDist.value.xyShape.xs,t.pointSetDist.value.xyShape.ys, (x,y) => {

|

||||

total += y / totalY;

|

||||

percentiles.forEach((v, i) => {

|

||||

if(total > v && bounds[i] == maxX){

|

||||

bounds[i] = x

|

||||

}

|

||||

})

|

||||

});

|

||||

return bounds;

|

||||

}

|

||||

else if(t.pointSetDist.tag == "Mixed"){

|

||||

let discreteShape = t.pointSetDist.value.discrete.xyShape;

|

||||

let totalDiscrete = discreteShape.ys.reduce((a, b) => a + b);

|

||||

|

||||

let discretePoints = _.zip(discreteShape.xs, discreteShape.ys);

|

||||

let continuousShape = t.pointSetDist.value.continuous.xyShape;

|

||||

let continuousPoints = _.zip(continuousShape.xs, continuousShape.ys);

|

||||

|

||||

interface labeledPoint {

|

||||

x: number,

|

||||

y: number,

|

||||

type: "discrete" | "continuous"

|

||||

};

|

||||

|

||||

let markedDisPoints : labeledPoint[] = discretePoints.map(([x,y]) => ({x: x, y: y, type: "discrete"}))

|

||||

let markedConPoints : labeledPoint[] = continuousPoints.map(([x,y]) => ({x: x, y: y, type: "continuous"}))

|

||||

|

||||

let sortedPoints = _.sortBy(markedDisPoints.concat(markedConPoints), 'x')

|

||||

|

||||

let totalContinuous = 1 - totalDiscrete;

|

||||

let totalY = continuousShape.ys.reduce((a:number, b:number) => a + b);

|

||||

|

||||

let total = 0;

|

||||

let maxX = _.max(sortedPoints.map(x => x.x));

|

||||

let bounds = percentiles.map(_ => maxX);

|

||||

sortedPoints.map((point: labeledPoint) => {

|

||||

if(point.type == "discrete") {

|

||||

total += point.y;

|

||||

}

|

||||

else if (point.type == "continuous") {

|

||||

total += point.y / totalY * totalContinuous;

|

||||

}

|

||||

percentiles.forEach((v,i) => {

|

||||

if(total > v && bounds[i] == maxX){

|

||||

bounds[i] = point.x;

|

||||

}

|

||||

})

|

||||

return total;

|

||||

});

|

||||

return bounds;

|

||||

}

|

||||

}
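The percentile lookup in `getPercentiles` can be read as a single pass over the cumulative weights. The block below is a minimal sketch of that idea, not the code from this PR: `percentileBounds` is a hypothetical helper name, and it tracks a `found` flag per percentile instead of comparing against `maxX` as a sentinel, which is an assumption about what a tidier version might look like.

```typescript
// Sketch only: `percentileBounds` is a hypothetical helper, not part of the PR.
// For each requested percentile p (a fraction in [0, 1]), return the first x
// whose normalized cumulative weight strictly exceeds p. If no point exceeds
// p, the largest x is used as the fallback.
function percentileBounds(
  xs: number[],
  ys: number[],
  percentiles: number[]
): number[] {
  const totalY = ys.reduce((a, b) => a + b, 0);
  const maxX = Math.max(...xs);
  const bounds: number[] = percentiles.map(() => maxX);
  const found: boolean[] = percentiles.map(() => false);

  let total = 0;
  xs.forEach((x, i) => {
    // Running share of the total weight up to and including this point.
    total += ys[i] / totalY;
    percentiles.forEach((p, j) => {
      if (!found[j] && total > p) {
        bounds[j] = x;
        found[j] = true;
      }
    });
  });
  return bounds;
}

// Four equally weighted points: the cumulative shares are 0.25, 0.5, 0.75, 1.
const example = percentileBounds([1, 2, 3, 4], [1, 1, 1, 1], [0.1, 0.5, 0.9]);
console.log(example); // [1, 3, 4]
```

Because the comparison is strict, the 50th percentile in the example lands on `x = 3` rather than `x = 2`. Whether that is the desired convention is a design choice the PR does not state.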

|

||||

|

||||

function MakeNumberShower(props: {number: number, precision :number}){

|

||||

let numberWithPresentation = numberShow(props.number, props.precision);

|

||||

return (

|

||||

<span>

|

||||

{numberWithPresentation.value}

|

||||

{numberWithPresentation.symbol}

|

||||

{numberWithPresentation.power ?

|

||||

<span>

|

||||

{'\u00b710'}

|

||||

<span style={{fontSize: "0.6em", verticalAlign: "super"}}>

|

||||

{numberWithPresentation.power}

|

||||

</span>

|

||||

</span>

|

||||

: <></>}

|

||||

</span>

|

||||

|

||||

);

|

||||

|

||||

}

|

||||

|

||||

const orderOfMagnitudeNum = (n:number) => {

|

||||

return Math.pow(10, n);

|

||||

};

|

||||

|

||||

// 105 -> 2

|

||||

const orderOfMagnitude = (n:number) => {

|

||||

return Math.floor(Math.log(n) / Math.LN10 + 0.000000001);

|

||||

};

|

||||

|

||||

function withXSigFigs(number:number, sigFigs:number) {

|

||||

const withPrecision = number.toPrecision(sigFigs);

|

||||

const formatted = Number(withPrecision);

|

||||

return `${formatted}`;

|

||||

}

|

||||

|

||||

class NumberShower {

|

||||

number: number

|

||||

precision: number

|

||||

|

||||

constructor(number:number, precision = 2) {

|

||||

this.number = number;

|

||||

this.precision = precision;

|

||||

}

|

||||

|

||||

convert() {

|

||||

const number = Math.abs(this.number);

|

||||

const response = this.evaluate(number);

|

||||

if (this.number < 0) {

|

||||

response.value = '-' + response.value;

|

||||

}

|

||||

return response

|

||||

}

|

||||

|

||||

metricSystem(number: number, order: number) {

|

||||

const newNumber = number / orderOfMagnitudeNum(order);

|

||||

const precision = this.precision;

|

||||

return `${withXSigFigs(newNumber, precision)}`;

|

||||

}

|

||||

|

||||

evaluate(number: number) {

|

||||

if (number === 0) {

|

||||

return { value: this.metricSystem(0, 0) }

|

||||

}

|

||||

|

||||

const order = orderOfMagnitude(number);

|

||||

if (order < -2) {

|

||||

return { value: this.metricSystem(number, order), power: order };

|

||||

} else if (order < 4) {

|

||||

return { value: this.metricSystem(number, 0) };

|

||||

} else if (order < 6) {

|

||||

return { value: this.metricSystem(number, 3), symbol: 'K' };

|

||||

} else if (order < 9) {

|

||||

return { value: this.metricSystem(number, 6), symbol: 'M' };

|

||||

} else if (order < 12) {

|

||||

return { value: this.metricSystem(number, 9), symbol: 'B' };

|

||||

} else if (order < 15) {

|

||||

return { value: this.metricSystem(number, 12), symbol: 'T' };

|

||||

} else {

|

||||

return { value: this.metricSystem(number, order), power: order };

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

export function numberShow(number: number, precision = 2) {

|

||||

const ns = new NumberShower(number, precision);

|

||||

return ns.convert();

|

||||

}
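The thresholds in `NumberShower.evaluate` can be easier to follow with a few concrete inputs. The block below is a simplified sketch under stated assumptions: `orderOf` and `showCompact` are hypothetical names, and the result is flattened into one string (with the power written as `·10^n`) rather than the `{ value, symbol, power }` object the component code returns.

```typescript
// Sketch only: `orderOf` and `showCompact` are hypothetical names that mirror
// the thresholds used in NumberShower.evaluate.

// Base-10 order of magnitude; the small epsilon guards against results such
// as 2.9999999999999996 for exact powers of ten.
const orderOf = (n: number): number =>
  Math.floor(Math.log(n) / Math.LN10 + 0.000000001);

function showCompact(n: number, sigFigs = 2): string {
  if (n === 0) return "0";
  const abs = Math.abs(n);
  const sign = n < 0 ? "-" : "";
  const order = orderOf(abs);
  // Divide by 10^divisorOrder, then round to the requested significant figures.
  const scaled = (divisorOrder: number): string =>
    `${Number((abs / Math.pow(10, divisorOrder)).toPrecision(sigFigs))}`;

  if (order < -2 || order >= 15) return `${sign}${scaled(order)}·10^${order}`;
  if (order < 4) return sign + scaled(0);
  if (order < 6) return sign + scaled(3) + "K";
  if (order < 9) return sign + scaled(6) + "M";
  if (order < 12) return sign + scaled(9) + "B";
  return sign + scaled(12) + "T";
}

console.log(orderOf(105)); // 2
console.log(showCompact(1234)); // "1200"
console.log(showCompact(25000)); // "25K"
console.log(showCompact(-3500000)); // "-3.5M"
```

With two significant figures, `1234` is shown as `1200` rather than `1.2K`, since the `K` suffix only starts at order 4.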

|

||||

1

packages/components/src/index.ts

Normal file

|

|

@ -0,0 +1 @@

|

|||

export { SquiggleChart } from './SquiggleChart';

|

||||

122

packages/components/src/spec-distributions.json

Normal file

|

|

@ -0,0 +1,122 @@

|

|||

{

|

||||

"$schema": "https://vega.github.io/schema/vega/v5.json",

|

||||

"description": "A basic area chart example.",

|

||||

"width": 500,

|

||||

"height": 200,

|

||||

"padding": 5,

|

||||

"data": [{"name": "con"}, {"name": "dis"}],

|

||||

|

||||

"signals": [

|

||||

{

|

||||

"name": "mousex",

|

||||

"description": "x position of mouse",

|

||||

"update": "0",

|

||||

"on": [{"events": "mousemove", "update": "1-x()/width"}]

|

||||

},

|

||||

{

|

||||

"name": "xscale",

|

||||

"description": "The transform of the x scale",

|

||||

"value": 1.0,

|

||||

"bind": {

|

||||

"input": "range",

|

||||

"min": 0.1,

|

||||

"max": 1

|

||||

}

|

||||

},

|

||||

{

|

||||

"name": "yscale",

|

||||

"description": "The transform of the y scale",

|

||||

"value": 1.0,

|

||||

"bind": {

|

||||

"input": "range",

|

||||

"min": 0.1,

|

||||

"max": 1

|

||||

}

|

||||

}

|

||||

],

|

||||

|

||||

"scales": [{

|

||||

"name": "xscale",

|

||||

"type": "pow",

|

||||

"exponent": {"signal": "xscale"},

|

||||

"range": "width",

|

||||

"zero": false,

|

||||

"nice": false,

|

||||

"domain": {

|

||||

"fields": [

|

||||

{ "data": "con", "field": "x"},

|

||||

{ "data": "dis", "field": "x"}

|

||||

]

|

||||

}

|

||||

}, {

|

||||

"name": "yscale",

|

||||

"type": "pow",

|

||||

"exponent": {"signal": "yscale"},

|

||||

"range": "height",

|

||||

"nice": true,

|

||||

"zero": true,

|

||||

"domain": {

|

||||

"fields": [

|

||||

{ "data": "con", "field": "y"},

|

||||

{ "data": "dis", "field": "y"}

|

||||

]

|

||||

}

|

||||

}

|

||||

],

|

||||

|

||||

"axes": [

|

||||

{"orient": "bottom", "scale": "xscale", "tickCount": 20},

|

||||

{"orient": "left", "scale": "yscale"}

|

||||

],

|

||||

|

||||

"marks": [

|

||||

{

|

||||

"type": "area",

|

||||

"from": {"data": "con"},

|

||||

"encode": {

|

||||

"enter": {

|

||||

"tooltip": {"signal": "datum.cdf"}

|

||||

},

|

||||

"update": {

|

||||

"x": {"scale": "xscale", "field": "x"},

|

||||

"y": {"scale": "yscale", "field": "y"},

|

||||

"y2": {"scale": "yscale", "value": 0},

|

||||

"fill": {

|

||||

"signal": "{gradient: 'linear', x1: 1, y1: 1, x2: 0, y2: 1, stops: [ {offset: 0.0, color: 'steelblue'}, {offset: clamp(mousex, 0, 1), color: 'steelblue'}, {offset: clamp(mousex, 0, 1), color: 'blue'}, {offset: 1.0, color: 'blue'} ] }"

|

||||

},

|

||||

"interpolate": {"value": "monotone"},

|

||||

"fillOpacity": {"value": 1}

|

||||

}

|

||||

}

|

||||

},

|

||||

{

|

||||

"type": "rect",

|

||||

"from": {"data": "dis"},

|

||||

"encode": {

|

||||

"enter": {

|

||||

"y2": {"scale": "yscale", "value": 0},

|

||||

"width": {"value": 1}

|

||||

},

|

||||

"update": {

|

||||

"x": {"scale": "xscale", "field": "x"},

|

||||

"y": {"scale": "yscale", "field": "y"}

|

||||

}

|

||||

}

|

||||

},

|

||||

{

|

||||

"type": "symbol",

|

||||

"from": {"data": "dis"},

|

||||

"encode": {

|

||||

"enter": {

|

||||

"shape": {"value": "circle"},

|

||||

"width": {"value": 5},

|

||||

"tooltip": {"signal": "datum.y"}

|

||||

},

|

||||

"update": {

|

||||

"x": {"scale": "xscale", "field": "x"},

|

||||

"y": {"scale": "yscale", "field": "y"}

|

||||

}

|

||||

}

|

||||

}

|

||||

]

|

||||

}

|

||||

9

packages/components/src/stories/Introduction.stories.mdx

Normal file

|

|

@ -0,0 +1,9 @@

|

|||

import { Meta } from '@storybook/addon-docs';

|

||||

|

||||

<Meta title="Squiggle/Introduction" />

|

||||

|

||||

This is the component library for Squiggle. All of these components are React

|

||||

components, and can be used in any application that you see fit.

|

||||

|

||||

Currently, the only component that is provided is the SquiggleChart component.

|

||||

This component allows you to render the result of a squiggle expression.

|

||||

|

|

@ -0,0 +1 @@

|

|||

{"version":3,"file":"SquiggleChart.stories.js","sourceRoot":"","sources":["SquiggleChart.stories.tsx"],"names":[],"mappings":";;;AAAA,6BAA8B;AAC9B,iDAA+C;AAG/C,qBAAe;IACb,KAAK,EAAE,uBAAuB;IAC9B,SAAS,EAAE,6BAAa;CACzB,CAAA;AAED,IAAM,QAAQ,GAAG,UAAC,EAAgB;QAAf,cAAc,oBAAA;IAAM,OAAA,oBAAC,6BAAa,IAAC,cAAc,EAAE,cAAc,GAAI;AAAjD,CAAiD,CAAA;AAE3E,QAAA,OAAO,GAAG,QAAQ,CAAC,IAAI,CAAC,EAAE,CAAC,CAAA;AACxC,eAAO,CAAC,IAAI,GAAG;IACb,cAAc,EAAE,cAAc;CAC/B,CAAC"}

|

||||

82

packages/components/src/stories/SquiggleChart.stories.mdx

Normal file

|

|

@ -0,0 +1,82 @@

|

|||

import { SquiggleChart } from '../SquiggleChart'

|

||||

import { Canvas, Meta, Story, Props } from '@storybook/addon-docs';

|

||||

|

||||

<Meta title="Squiggle/SquiggleChart" component={ SquiggleChart } />

|

||||

|

||||

export const Template = SquiggleChart

|

||||

|

||||